SyncTwin: Fast Digital Twin Construction and Synchronization for Safe Robotic Manipulation

Abstract

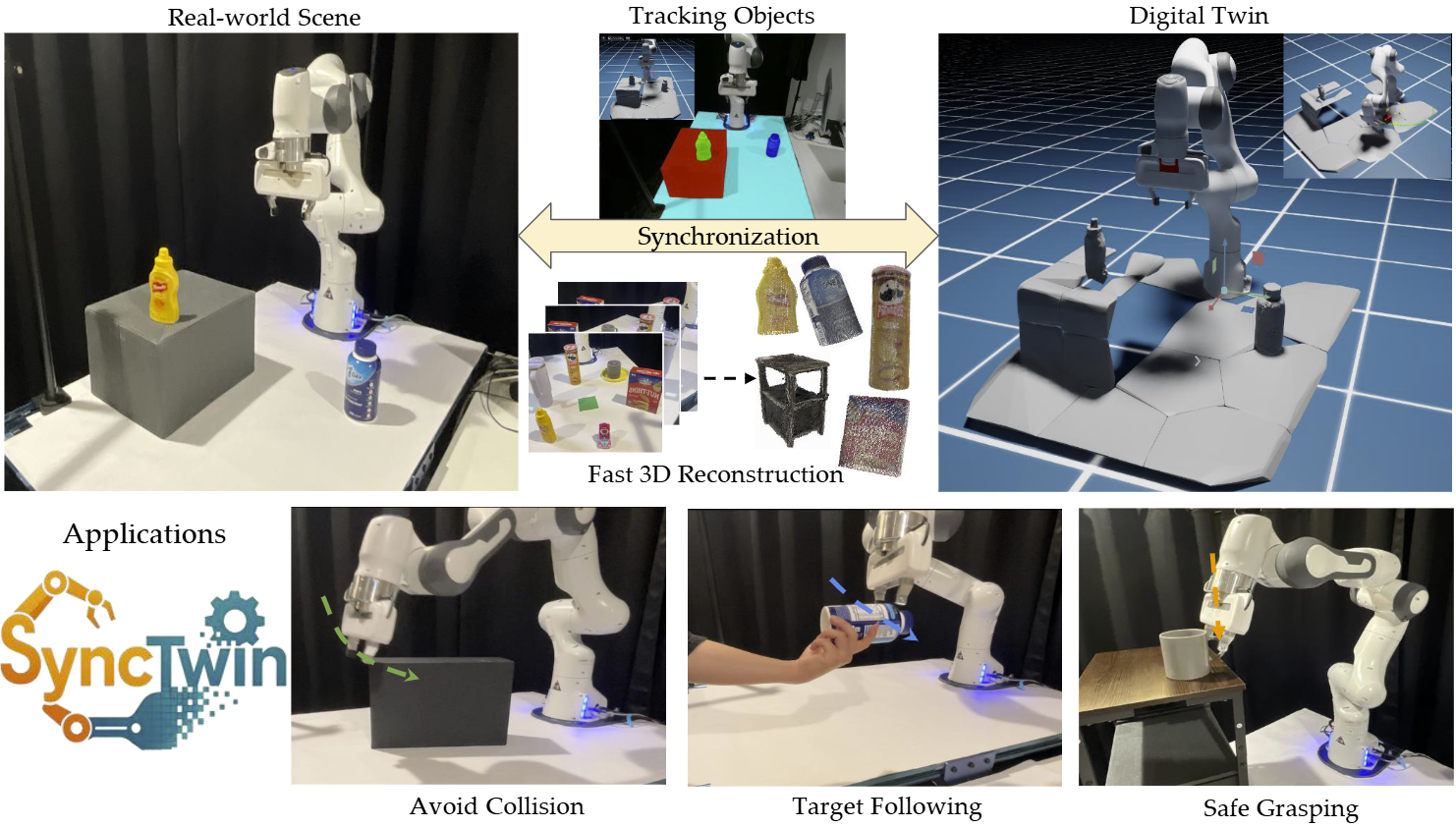

Accurate and safe robotic manipulation under dynamic and visually occluded conditions remains a core challenge in real-world deployment. We introduce SyncTwin, a novel digital twin framework that unifies fast 3D scene reconstruction and real-to-sim synchronization for robust and safety-aware robotic manipulation in such environments. In the offline stage, we employ VGGT to rapidly reconstruct object-level 3D assets from RGB images, forming a reusable geometry library. During execution, SyncTwin continuously synchronizes the digital twin by tracking real-world object states via point cloud segmentation updates and aligning them through colored-ICP registration. The synchronized twin enables motion planners to compute collision-free and dynamically feasible trajectories in simulation, which are safely executed on the real robot through a closed real-to-sim-to-real loop. Experiments in dynamic and occluded scenes show that SyncTwin improves manipulation performance and motion safety, demonstrating the effectiveness of digital twin synchronization for real-world robotic execution.

Motivation

Real-world safe manipulation is challenged by partial single-view perception and dynamic scenes with moving objects and occlusions, causing planners to operate on incomplete or outdated geometry and resulting in unsafe execution.

To overcome these limitations, a robot needs a continuously synchronized digital twin, one that mirrors the real world in real time and provides accurate geometry for planning.

Method

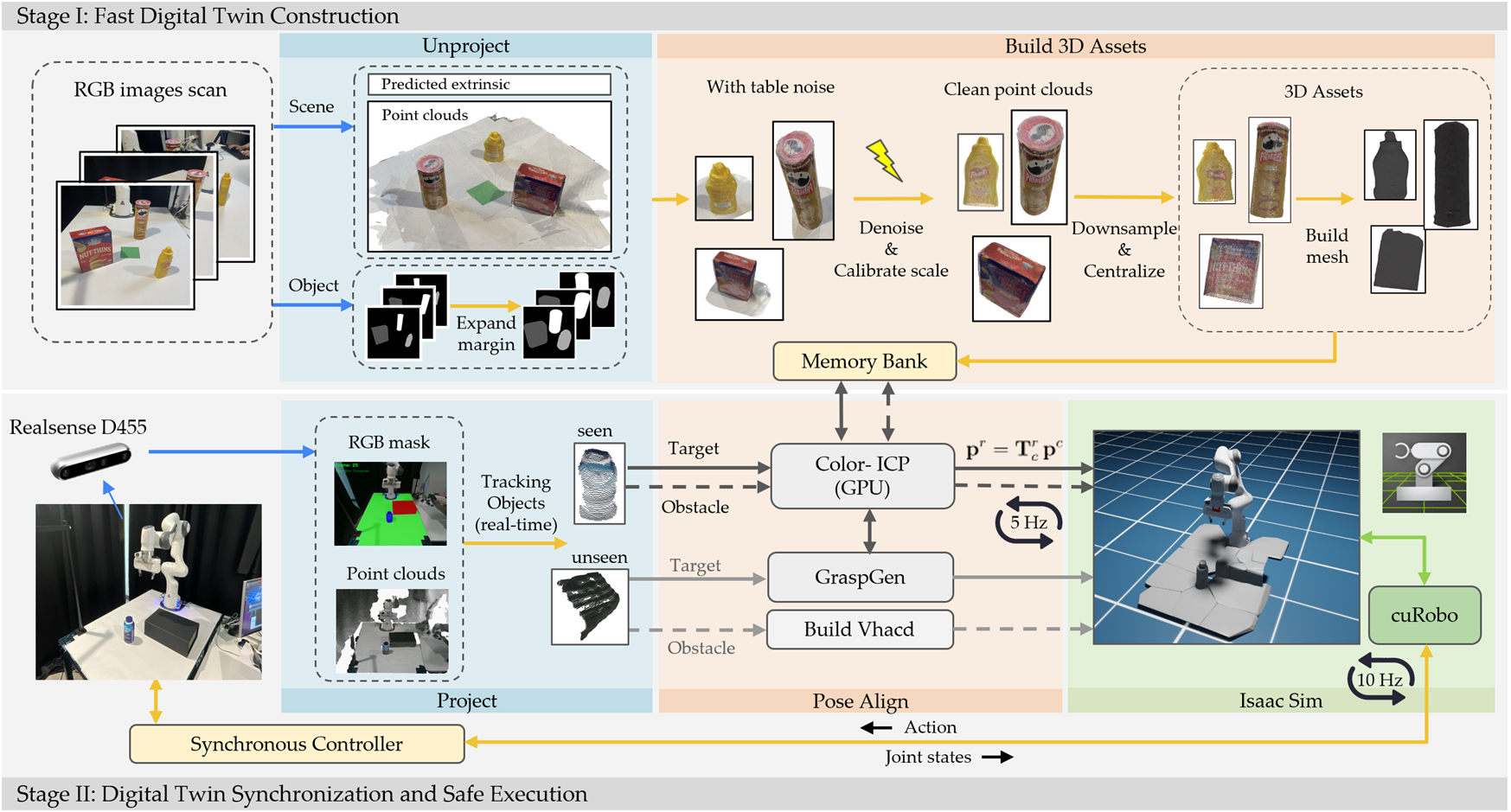

The full SyncTwin method consists of two stages. Stage I performs fast digital-twin construction: VGGT generates scene-level point clouds from RGB inputs. Object-level point clouds are extracted via projection, segmentation, and denoising, then converted into lightweight meshes and stored in a memory bank. Stage II performs online synchronization: a RealSense camera provides live RGB-D frames. After segmentation, partial point clouds are aligned to the memory-bank assets using colored ICP, and the updated poses are sent to Isaac Sim for MPC planning, closing the loop.
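To make the alignment step in Stage II more concrete, here is a minimal sketch of the closed-form rigid alignment (the Kabsch solution) that ICP-style methods solve at each iteration. It is a simplified stand-in, not the colored ICP used by SyncTwin: it assumes point correspondences are already known and ignores the color term. The function name `rigid_align` is hypothetical.

```python
import numpy as np

def rigid_align(source, target):
    """Least-squares rigid transform (R, t) such that R @ source[i] + t ~ target[i].

    Both inputs are (N, 3) arrays; row i of source is assumed to correspond
    to row i of target (real ICP re-estimates correspondences each iteration).
    """
    src_centroid = source.mean(axis=0)
    tgt_centroid = target.mean(axis=0)
    # 3x3 cross-covariance between the centered point sets.
    H = (source - src_centroid).T @ (target - tgt_centroid)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_centroid - R @ src_centroid
    return R, t

# Usage: recover a known pose applied to a synthetic "asset" point cloud.
rng = np.random.default_rng(0)
asset = rng.normal(size=(50, 3))
theta = 0.3
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([0.1, -0.2, 0.05])
observed = asset @ R_true.T + t_true
R_est, t_est = rigid_align(asset, observed)
```

In a full registration pipeline this solve would sit inside a loop that re-matches points between the partial observation and the stored asset, and colored ICP would add a photometric residual to the geometric one.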

Stage I — Fast Digital Twin Construction

Stage I proceeds as follows. VGGT generates a scene point cloud, from which we detect projection intersections and segment object regions. Because of prediction error, the segmented result still contains noise. To remove this noise, we introduce a geometric sphere-expansion method that identifies opening edges and cleanly separates the supporting plane. This produces clean point clouds, which we downsample, centralize, and finally convert into lightweight meshes stored in the memory bank.
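The downsample and centralize steps can be sketched as follows. This is an illustrative NumPy version under assumptions: the page does not say which downsampling scheme is used, so voxel-grid averaging is chosen here as a common option, and the function names and `voxel_size` parameter are hypothetical. The sphere-expansion denoising is not reproduced.

```python
import numpy as np

def voxel_downsample(points, voxel_size):
    """Replace all points that fall into the same voxel by their centroid."""
    keys = np.floor(points / voxel_size).astype(np.int64)
    _, inverse, counts = np.unique(
        keys, axis=0, return_inverse=True, return_counts=True
    )
    inverse = inverse.reshape(-1)  # shape differs across NumPy versions
    sums = np.zeros((counts.size, 3))
    np.add.at(sums, inverse, points)  # accumulate points per voxel
    return sums / counts[:, None]

def centralize(points):
    """Shift the cloud so its centroid is at the origin; also return the centroid."""
    centroid = points.mean(axis=0)
    return points - centroid, centroid

# Usage: the first two points share a voxel, the third sits in its own voxel.
cloud = np.array([[0.0, 0.0, 0.0],
                  [0.2, 0.2, 0.2],
                  [1.1, 1.1, 1.1]])
reduced = voxel_downsample(cloud, voxel_size=0.5)
centered, centroid = centralize(reduced)
```

Storing the centered cloud together with its centroid keeps each asset in a canonical object frame, so a later registration step only has to estimate the object's pose.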

Stage II — Online Digital Twin Synchronization

Stage II keeps the digital twin synchronized. We use a sliding-window memory to ensure temporally stable segmentation: the first frame saves an initial prompt, and subsequent frames update the memory representation. Each incoming frame is encoded together with the memory features and decoded by SAM, producing consistent masks even under occlusions. The resulting masks support real-time object tracking. The updated object states drive the MPC planner inside Isaac Sim, forming a continuous real-to-sim-to-real loop.
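The sliding-window memory described above can be illustrated with a small data structure. This is a sketch under assumptions, not the SyncTwin implementation: the class name, the `window_size` parameter, and the choice to keep the first-frame prompt permanently alongside the most recent frames are all hypothetical, and the feature encoder and SAM decoder are not modeled (features are opaque placeholders).

```python
from collections import deque

class SlidingWindowMemory:
    """Keeps the first-frame prompt plus the most recent `window_size` frames."""

    def __init__(self, window_size):
        self.prompt = None                       # entry saved from the first frame
        self.recent = deque(maxlen=window_size)  # oldest entries drop automatically

    def update(self, frame_id, features):
        if self.prompt is None:
            # The first frame stores the initial prompt.
            self.prompt = (frame_id, features)
        else:
            # Later frames refresh the rolling memory.
            self.recent.append((frame_id, features))

    def context(self):
        """Entries that would condition the segmentation of the next frame."""
        if self.prompt is None:
            return []
        return [self.prompt, *self.recent]

# Usage: after five frames with a window of two, only frames 0, 3, and 4 remain.
memory = SlidingWindowMemory(window_size=2)
for frame_id in range(5):
    memory.update(frame_id, features=f"feat-{frame_id}")
context_ids = [frame_id for frame_id, _ in memory.context()]
```

A bounded window keeps the per-frame cost constant, while retaining the prompt entry gives the segmenter a stable reference when the object is briefly occluded.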

Experiments & Results

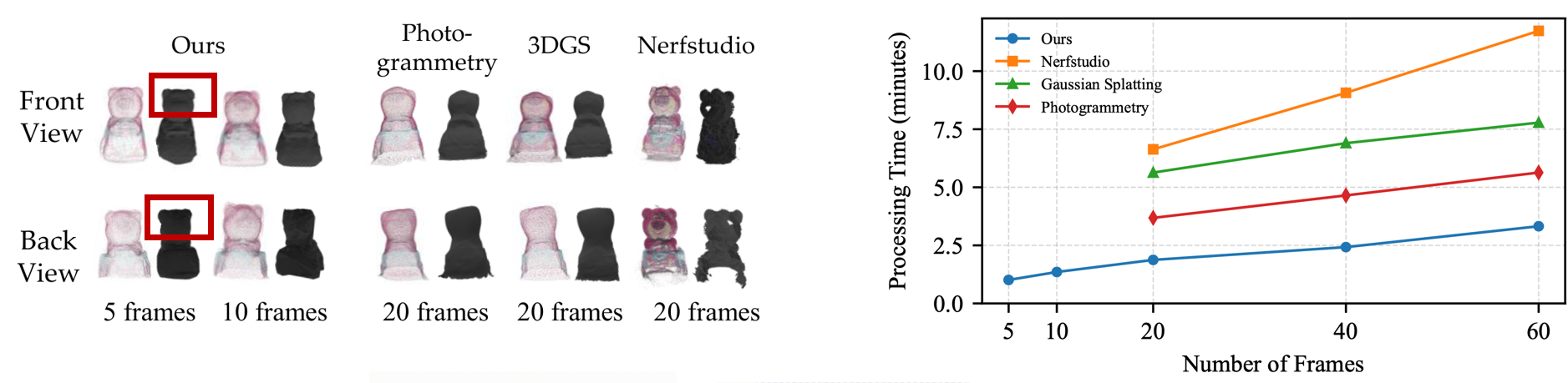

Experiment — Fast 3D Assets Reconstruction

We evaluate our fast 3D asset reconstruction against multiple baselines. As shown on the left, SyncTwin produces clean object geometry using only five to ten frames, while traditional methods such as Photogrammetry, 3D Gaussian Splatting, and Nerfstudio require at least 20 to 60 frames. SyncTwin reconstructs a mesh in 1 to 2 minutes, significantly faster than all baselines. Our method also preserves fine-grained geometric details (e.g., the shape of the bear's ears remains well preserved).

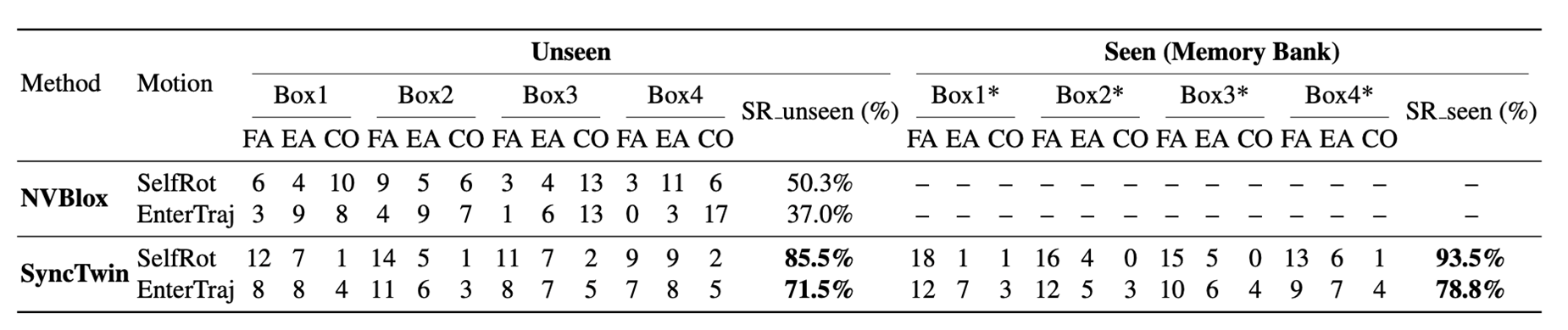

Experiment — Avoid Collision in the Real World

We next evaluate dynamic obstacle avoidance in real-world scenarios. On the left, using NVBlox, the robot often fails to avoid collisions because voxel-based geometry is incomplete and updates slowly. On the right, SyncTwin maintains accurate object geometry through real-time alignment, enabling the robot to safely avoid obstacles, even when they move during execution.

Quantitatively, SyncTwin substantially outperforms NVBlox. For unseen obstacles, we achieve up to 85.5% success in the self-rotation condition, and 71.5% in the enter-trajectory condition, compared to NVBlox at 50.3% and 37.0%. For seen objects stored in the memory bank, having full object geometry leads to even stronger results: SyncTwin reaches 93.5% and 78.8% success, respectively. These results validate the importance of using accurate, asset-level geometry for safety-critical planning.
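The margins over NVBlox implied by these numbers can be worked out directly. The short script below only restates the success rates quoted above and computes the differences in percentage points; the variable and function names are illustrative.

```python
# Success rates (%) as quoted in the text above.
success = {
    "self-rotation": {
        "NVBlox": 50.3, "SyncTwin (unseen)": 85.5, "SyncTwin (seen)": 93.5,
    },
    "enter-trajectory": {
        "NVBlox": 37.0, "SyncTwin (unseen)": 71.5, "SyncTwin (seen)": 78.8,
    },
}

def gain_over_baseline(rates, baseline="NVBlox"):
    """Absolute improvement over the baseline, in percentage points."""
    return {
        name: round(value - rates[baseline], 1)
        for name, value in rates.items()
        if name != baseline
    }

gains = {cond: gain_over_baseline(rates) for cond, rates in success.items()}
for cond, by_method in gains.items():
    for method, points in by_method.items():
        print(f"{cond:17s} {method:18s} +{points} points")
```

In both conditions the gain for unseen obstacles is roughly 35 percentage points, and having the full asset in the memory bank adds about 7 to 8 points on top of that.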

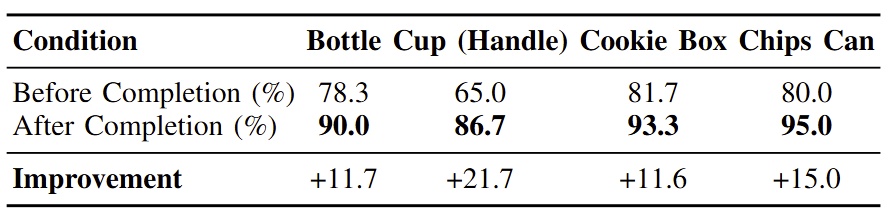

Experiment — Safe Grasping Under Single-View Occlusion

Finally, we demonstrate safe grasping under single-view occlusion. Without geometry completion, the robot sees only partial point clouds and often generates unsafe or failing grasps, especially for asymmetric objects such as cups with handles. With SyncTwin, the complete 3D asset from the memory bank replaces the partial observation, leading to more stable grasp candidates and significantly higher success rates. As shown in the table, performance improves by over 20% on challenging objects, enabling reliable grasping in real-world scenes.

BibTeX

@article{huang2026synctwin,
  title={SyncTwin: Fast Digital Twin Construction and Synchronization for Safe Robotic Manipulation},
  author={Huang, Ruopeng and Yang, Boyu and Gui, Wenlong and Morgan, Jeremy and Biyik, Erdem and Li, Jiachen},
  journal={arXiv preprint arXiv:2601.09920},
  year={2026}
}